Brocade OpenStack VDX Plugin (AMPP)

This post describes the setup of the OpenStack plugins for Brocade VDX devices.

|

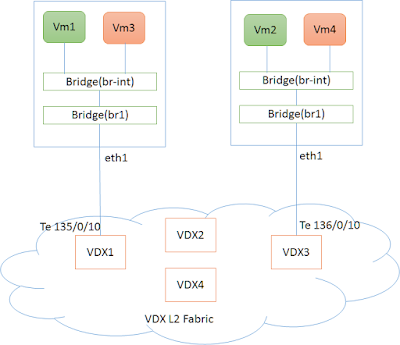

| Fig 1. Setup of VDX Fabric with Compute Nodes |

Fig 1 shows a typical physical deployment of servers (compute nodes) connected to a VDX L2 fabric.

On each compute node, eth1 is connected to a VDX interface (e.g. Te 135/0/10) and is a port of the OVS bridge br1.

Note: To create bridge br1 on the compute nodes and add port eth1 to it, run:

sudo ovs-vsctl add-br br1

sudo ovs-vsctl add-port br1 eth1
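Running the two commands again on a node where br1 already exists makes ovs-vsctl fail. The sketch below prints an idempotent variant that uses ovs-vsctl's `--may-exist` option, and can optionally run it. It is a minimal sketch that assumes Python 3.9+ and passwordless sudo. `ovs_bridge_commands` and `apply` are hypothetical helper names, not part of any OpenStack tool.

```python
import shlex
import subprocess


def ovs_bridge_commands(bridge: str = "br1", port: str = "eth1") -> list[list[str]]:
    """ovs-vsctl commands that create `bridge` and add `port` to it.

    --may-exist turns each command into a no-op when the bridge or port is
    already present, so the setup can be re-run safely on every compute node.
    """
    return [
        ["sudo", "ovs-vsctl", "--may-exist", "add-br", bridge],
        ["sudo", "ovs-vsctl", "--may-exist", "add-port", bridge, port],
    ]


def apply(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


apply(ovs_bridge_commands())  # dry run: prints the two commands only
```

Call `apply(ovs_bridge_commands(), dry_run=False)` on a compute node to actually create the bridge.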

Setup of the OpenStack Plugin

Prerequisites

Install ncclient from the Brocade GitHub repository:

git clone https://github.com/brocade/ncclient

cd ncclient

sudo python setup.py install

Upgrade the Database

neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head

This upgrade creates the Brocade-specific tables in the Neutron database.

Layer 2 and Layer 3 Networking using AMPP (Automatic Migration of Port Profiles)

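Brocade VDX devices have a feature called AMPP. AMPP associates port-profiles with the MAC addresses of virtual machines, so that a VM's network configuration follows it across the fabric.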

OpenStack Configuration (AMPP L2 Setup)

Using AMPP for L2 and L3 networking keeps the OpenStack configuration simple. This section describes the configuration steps required to enable this feature from OpenStack Neutron.

The following configuration lines need to be present in '/etc/neutron/plugins/ml2/ml2_conf.ini':

[ml2]
tenant_network_types = vlan
type_drivers = vlan
mechanism_drivers = openvswitch,brocade_ampp

[ml2_type_vlan]
network_vlan_ranges = physnet1:2:500

[ovs]
bridge_mappings = physnet1:br1

- The mechanism drivers must include 'brocade_ampp' along with 'openvswitch'.

- 'br1' is the Open vSwitch bridge, and bridge_mappings maps the physical network 'physnet1' to it.

- '2:500' is the VLAN range used.
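Before restarting neutron-server, it can help to sanity-check these values. The sketch below uses Python's standard-library configparser and runs against the sample lines above; in practice you would point it at the real file.

It checks three things:
- both drivers from the post are listed;
- the VLAN range is valid;
- every physical network has an OVS bridge mapping.

`check_ml2` and `parse_vlan_ranges` are hypothetical helpers, not part of Neutron. Only the `physnet:min:max` form of network_vlan_ranges is handled.

```python
import configparser

# The [ml2], [ml2_type_vlan] and [ovs] lines from above. In practice, read
# /etc/neutron/plugins/ml2/ml2_conf.ini with cfg.read(path) instead.
SAMPLE = """
[ml2]
tenant_network_types = vlan
type_drivers = vlan
mechanism_drivers = openvswitch,brocade_ampp

[ml2_type_vlan]
network_vlan_ranges = physnet1:2:500

[ovs]
bridge_mappings = physnet1:br1
"""


def parse_vlan_ranges(value: str) -> dict[str, tuple[int, int]]:
    """Parse 'physnet:min:max[,physnet:min:max...]' into {physnet: (min, max)}."""
    ranges = {}
    for entry in value.split(","):
        physnet, vmin, vmax = entry.strip().split(":")
        ranges[physnet] = (int(vmin), int(vmax))
    return ranges


def check_ml2(text: str) -> list[str]:
    """Return a list of problems; an empty list means every check passed."""
    cfg = configparser.ConfigParser()
    cfg.read_string(text)
    problems = []

    # Both mechanism drivers named in the post must be listed.
    drivers = [d.strip() for d in cfg.get("ml2", "mechanism_drivers").split(",")]
    for required in ("openvswitch", "brocade_ampp"):
        if required not in drivers:
            problems.append(f"mechanism_drivers is missing {required}")

    # VLAN IDs must fall inside the usable 802.1Q range 1-4094.
    ranges = parse_vlan_ranges(cfg.get("ml2_type_vlan", "network_vlan_ranges"))
    for physnet, (vmin, vmax) in ranges.items():
        if not 1 <= vmin <= vmax <= 4094:
            problems.append(f"invalid VLAN range {vmin}:{vmax} for {physnet}")

    # Every physical network needs an OVS bridge (physnet1 -> br1 here).
    bridges = dict(m.strip().split(":", 1) for m in cfg.get("ovs", "bridge_mappings").split(","))
    for physnet in ranges:
        if physnet not in bridges:
            problems.append(f"{physnet} has no OVS bridge mapping")
    return problems


print(check_ml2(SAMPLE))  # []
```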

The following configuration lines need to be added to either '/etc/neutron/plugins/ml2/ml2_conf_brocade.ini' or '/etc/neutron/plugins/ml2/ml2_conf.ini'.

If you add them to '/etc/neutron/plugins/ml2/ml2_conf_brocade.ini', pass that file as an additional --config-file parameter when starting neutron-server.

[ml2_brocade]
username = admin
password = password
address = 10.37.18.139
ostype = NOS
physical_networks = physnet1
osversion = 5.0.0
initialize_vcs = True
nretries = 5
ndelay = 10
nbackoff = 2

In the [ml2_brocade] section:

- address (10.37.18.139) is the VCS virtual IP, i.e. the IP address of the L2 fabric.

- osversion - NOS version running on the L2 fabric.

- nretries - number of times a NETCONF request to the switch is retried in case of failure.

- ndelay - time delay, in seconds, between successive NETCONF requests in case of failure.
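The post does not spell out how nretries, ndelay and nbackoff interact, and it does not explain nbackoff at all. The sketch below shows one plausible reading. It assumes nbackoff is a multiplier applied to the wait after each failed attempt. `call_with_retries` is a hypothetical helper, not the Brocade driver's code.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(
    func: Callable[[], T],
    nretries: int = 5,
    ndelay: float = 10,
    nbackoff: float = 2,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call func(); on failure, retry it up to nretries more times.

    ASSUMPTION: the first retry waits ndelay seconds and each later wait is
    multiplied by nbackoff (10 s, 20 s, 40 s, ... with the values above).
    """
    if nretries < 0:
        raise ValueError("nretries must be >= 0")
    delay = ndelay
    for attempt in range(nretries + 1):
        try:
            return func()
        except Exception:
            if attempt == nretries:
                raise  # retries exhausted: re-raise the last error
            sleep(delay)
            delay *= nbackoff


# Demo: an operation that fails twice, with a sleep that only records the waits.
calls = {"n": 0}


def flaky() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("switch not reachable")
    return "ok"


waits: list[float] = []
print(call_with_retries(flaky, sleep=waits.append), waits)  # ok [10, 20]
```

Injecting `sleep` keeps the demo instant; in real use the default `time.sleep` applies.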

VDX Configurations

Put all interfaces connected to the compute nodes (Te 135/0/10 and Te 136/0/10 in the topology above) into port-profile mode. This is a one-time configuration.

sw0(config)# interface TenGigabitEthernet 135/0/10

sw0(conf-if-te-135/0/10)# port-profile-port

sw0(config)# interface TenGigabitEthernet 136/0/10

sw0(conf-if-te-136/0/10)# port-profile-port
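With many compute-facing ports, this one-time VDX configuration can be generated rather than typed by hand. The sketch below is small and makes two assumptions:
- `port_profile_port_config` is a hypothetical helper, and the interface IDs are the ones from the topology above.
- The `exit` after each interface returns you to the sw0(config)# prompt before the next `interface` command. This is an assumption about how the lines would be pasted at the switch prompt.

```python
def port_profile_port_config(interfaces: list[str]) -> str:
    """VDX CLI lines that put each TenGigabitEthernet interface into port-profile mode.

    `interfaces` use the rbridge-id/slot/port form, e.g. "135/0/10".
    ASSUMPTION: 'exit' returns from interface mode to sw0(config)# so the next
    'interface' command is entered from global configuration mode.
    """
    lines = []
    for intf in interfaces:
        lines += [f"interface TenGigabitEthernet {intf}", "port-profile-port", "exit"]
    return "\n".join(lines)


print(port_profile_port_config(["135/0/10", "136/0/10"]))
```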

Sample Setup commands

Create a GREEN network (10.0.0.0/24).

user@controller:~$ neutron net-create GREEN_NETWORK

user@controller:~$ neutron subnet-create GREEN_NETWORK 10.0.0.0/24 --name GREEN_SUBNET --gateway=10.0.0.1

user@controller:~$ neutron net-show GREEN_NETWORK

+---------------------------+--------------------------------------+
| Field                     | Value                                |
+---------------------------+--------------------------------------+
| admin_state_up            | True                                 |
| availability_zone_hints   |                                      |
| availability_zones        | nova                                 |
| created_at                | 2016-04-11T10:11:21                  |
| description               |                                      |
| id                        | d6a46093-a639-4fb8-9b7d-64aa0743a45d |
| ipv4_address_scope        |                                      |
| ipv6_address_scope        |                                      |
| mtu                       | 1500                                 |
| name                      | GREEN_NETWORK                        |
| port_security_enabled     | True                                 |
| provider:network_type     | vlan                                 |
| provider:physical_network | physnet1                             |
| provider:segmentation_id  | 86                                   |
| router:external           | False                                |
| shared                    | False                                |
| status                    | ACTIVE                               |
| subnets                   | 0f19fba0-0e1c-4661-a893-e83c43ec269c |
| tags                      |                                      |
| tenant_id                 | ed2196b380214e6ebcecc7d70e01eba4     |
| updated_at                | 2016-04-11T10:11:21                  |
+---------------------------+--------------------------------------+

GREEN_NETWORK has a segmentation_id of 86, and its network_type is vlan.

d6a46093-a639-4fb8-9b7d-64aa0743a45d is the net-id used in the boot commands below.

Check the availability zones. We will launch one VM on each of the servers.

user@controller:~$ nova availability-zone-list
+-----------------------+----------------------------------------+
| Name                  | Status                                 |
+-----------------------+----------------------------------------+
| internal              | available                              |
| |- controller         |                                        |
| | |- nova-conductor   | enabled :-) 2016-04-11T05:10:06.000000 |
| | |- nova-scheduler   | enabled :-) 2016-04-11T05:10:07.000000 |
| | |- nova-consoleauth | enabled :-) 2016-04-11T05:10:07.000000 |
| nova                  | available                              |
| |- compute            |                                        |
| | |- nova-compute     | enabled :-) 2016-04-11T05:10:10.000000 |
| |- controller         |                                        |
| | |- nova-compute     | enabled :-) 2016-04-11T05:10:05.000000 |
+-----------------------+----------------------------------------+

Boot VM1 on the server named "controller":

user@controller:~$ nova boot --nic net-id="d6a46093-a639-4fb8-9b7d-64aa0743a45d" --image cirros-0.3.4-x86_64-uec --flavor m1.tiny --availability-zone nova:controller VM1

Boot VM2 on the server named "compute":

user@controller:~$ nova boot --nic net-id="d6a46093-a639-4fb8-9b7d-64aa0743a45d" --image cirros-0.3.4-x86_64-uec --flavor m1.tiny --availability-zone nova:compute VM2
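The same pattern extends to one VM per compute host. The sketch below rebuilds the two boot commands above from a list of host names taken from the availability-zone list. `boot_commands` is a hypothetical helper; it only prints the commands, so nothing is booted. The image, flavor and net-id defaults are the values used in this post.

```python
import shlex

NET_ID = "d6a46093-a639-4fb8-9b7d-64aa0743a45d"  # GREEN_NETWORK id from net-show


def boot_commands(hosts: list[str], net_id: str = NET_ID,
                  image: str = "cirros-0.3.4-x86_64-uec",
                  flavor: str = "m1.tiny", zone: str = "nova") -> list[str]:
    """One 'nova boot' per host; --availability-zone zone:host places VMn on that host."""
    cmds = []
    for i, host in enumerate(hosts, start=1):
        args = ["nova", "boot", "--nic", f"net-id={net_id}", "--image", image,
                "--flavor", flavor, "--availability-zone", f"{zone}:{host}", f"VM{i}"]
        cmds.append(shlex.join(args))  # shell-safe quoting where needed
    return cmds


for cmd in boot_commands(["controller", "compute"]):
    print(cmd)
```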

On the VDX, the MAC addresses of the virtual machines (VM1 and VM2) are associated with the port-profile openstack-profile-86:

sw0(conf-if-te-136/0/10)# do show port-profile status
Port-Profile          PPID  Activated  Associated MAC  Interface
UpgradedVlanProfile   1     No         None            None
openstack-profile-86  2     Yes        fa16.3e4b.a93b  None
                                       fa16.3e94.a0cc  None
                                       fa16.3e97.88e3  None
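The output above can be parsed to check which MACs are associated with a network's port-profile. In the sample output, the profile for GREEN_NETWORK is named openstack-profile-86, which matches its segmentation_id of 86. The sketch assumes that naming pattern holds for other networks too, but the post shows it for only this one. `macs_by_profile` and `profile_for_vlan` are hypothetical helpers, and the parser assumes the column layout shown above.

```python
# 'show port-profile status' output as shown above.
SAMPLE = """\
Port-Profile          PPID  Activated  Associated MAC  Interface
UpgradedVlanProfile   1     No         None            None
openstack-profile-86  2     Yes        fa16.3e4b.a93b  None
                                       fa16.3e94.a0cc  None
                                       fa16.3e97.88e3  None
"""


def macs_by_profile(output: str) -> dict[str, list[str]]:
    """Map each port-profile to its associated MAC addresses.

    A line starting with whitespace continues the profile on the line above
    and lists one more MAC; 'None' means no MAC is associated.
    """
    result: dict[str, list[str]] = {}
    current = None
    for line in output.splitlines()[1:]:  # skip the header row
        if not line.strip():
            continue
        fields = line.split()
        if line[0].isspace():
            mac = fields[0]
        else:
            current, mac = fields[0], fields[3]
            result[current] = []
        if mac != "None" and current is not None:
            result[current].append(mac)
    return result


def profile_for_vlan(vlan_id: int) -> str:
    # ASSUMPTION: naming pattern seen above (segmentation_id 86 -> openstack-profile-86).
    return f"openstack-profile-{vlan_id}"


print(macs_by_profile(SAMPLE)[profile_for_vlan(86)])
# ['fa16.3e4b.a93b', 'fa16.3e94.a0cc', 'fa16.3e97.88e3']
```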

At this stage, the virtual machines should be able to ping each other.

[Figure: Ping from VM2 to VM3]
